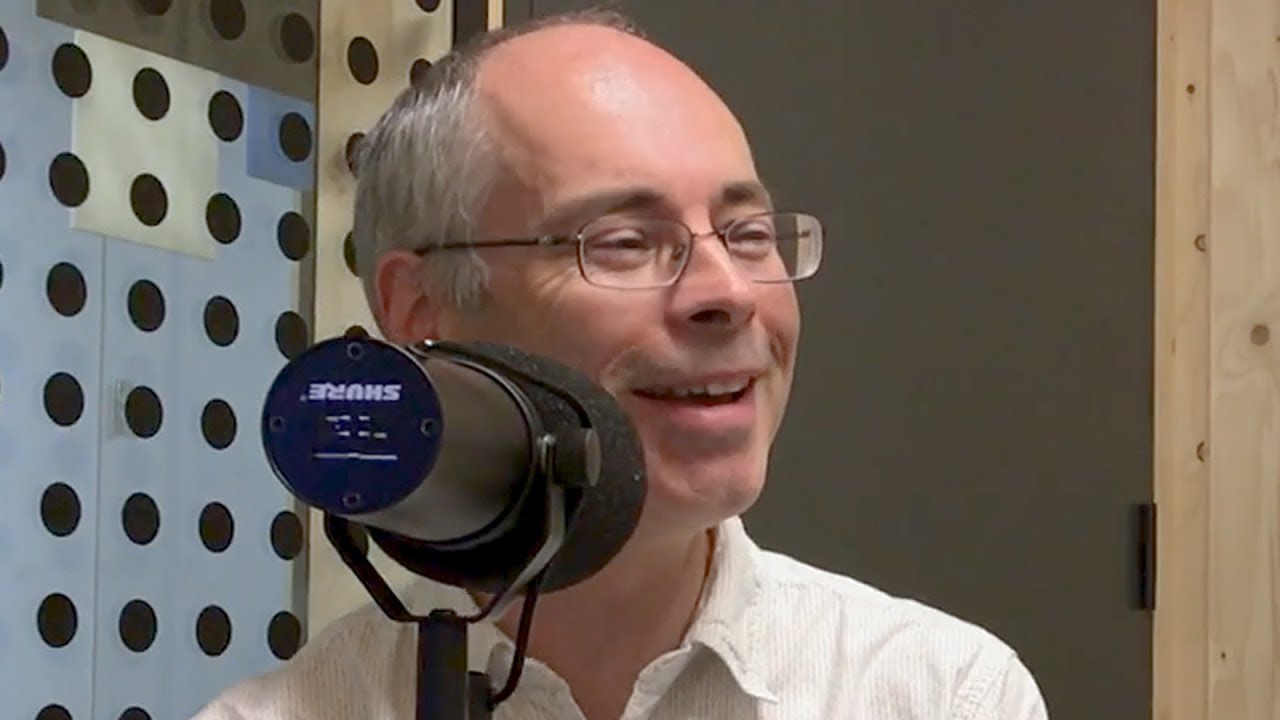

Ex Machina's Scientific Advisor - Murray Shanahan

by Y Combinator, 6/28/2017

Murray Shanahan was one of the scientific advisors on Ex Machina.

He’s also a Research Scientist at DeepMind and professor of Cognitive Robotics at Imperial College London.

His book Embodiment and the Inner Life served as inspiration for Alex Garland while he was writing the screenplay for Ex Machina.

Transcript

Craig Cannon [00:00] – Hey, this is Craig Cannon and you’re listening to Y Combinator’s podcast. Today’s episode is with Murray Shanahan, who was one of the scientific advisors on Ex Machina. Murray is a research scientist at DeepMind and Professor of Cognitive Robotics at Imperial College London. He’s also the author of several books, one of which, Embodiment and the Inner Life, served as inspiration for Alex Garland while he was writing the screenplay for Ex Machina. All right, here we go. I think the first question I wanted to ask you is: given the popularity of AI, or at least the interest in AI right now, what was it like when you were doing your PhD thesis on AI in the ’80s?

Murray Shanahan [00:35] – Well, very different. I mean, it’s quite a surprise for me to find myself in this current position where everyone is interested in what I’m doing. The media are interested. Corporations are interested. Certainly when I was a PhD student and when I was a young post-doc, it was a fairly niche area, so you could just kind of work away in your little corner doing things that you thought were intellectually interesting and be reasonably secure that you weren’t gonna be bothered by anybody. But it’s not like that anymore.

Craig Cannon [01:09] – What exactly was the subject matter at that time? What were you working on?

Murray Shanahan [01:13] – At the time of my thesis?

Craig Cannon [01:15] – Yeah.

Murray Shanahan [01:16] – I worked on how you could use… This is a tricky question. You’re asking me to go back. Let me think. What is it, like, 30-something years?

Craig Cannon [01:32] – Yeah.

Murray Shanahan [01:32] – 30-something years, yeah. 30 years ago, I finished my thesis. Okay, so what did it look like? I was interested in logic programming and Prolog-type languages. I was interested in how you could speed up answering queries in Prolog-like languages by keeping a kind of record of the relationships between facts and theorems that you’d already established. So instead of having to redo all the computations from scratch, it kept a little collection of the relationships between properties that you’d already worked out, so that you didn’t have to redo the same computations all over again. That was the main contribution of the thesis. I’m amazed I can remember it, if you think about it.
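Murray’s description of the thesis, keeping a record of results already derived so that later queries need not recompute them, is close in spirit to what Prolog systems call tabling, or memoization more generally. The sketch below is a minimal Python illustration under that assumption, not the thesis’s actual technique: the `PARENT` facts, the `descendants` and `ancestor` helpers, and the `derivations` counter are all hypothetical, and it assumes an acyclic fact base (real tabling engines also handle cycles).

```python
from functools import lru_cache

# Hypothetical fact base, written as Prolog-style parent(X, Y) facts.
PARENT = {
    ("alice", "bob"),
    ("bob", "carol"),
    ("carol", "dave"),
}

derivations = 0  # how many times a subgoal is actually derived, not looked up


def children(x):
    """All Y such that parent(x, Y) is a fact."""
    return [child for (parent, child) in PARENT if parent == x]


@lru_cache(maxsize=None)
def descendants(x):
    """All Y such that ancestor(x, Y) holds; each answer set is cached (tabled).

    Assumes the parent relation is acyclic; a cycle would recurse forever here.
    """
    global derivations
    derivations += 1
    found = set()
    for child in children(x):
        found.add(child)
        found |= descendants(child)  # reuses cached results for shared subgoals
    return frozenset(found)


def ancestor(x, y):
    return y in descendants(x)


print(ancestor("alice", "dave"))  # True: derives answers for alice, bob, carol, dave
print(ancestor("bob", "dave"))    # True: answered from the cache
print(derivations)                # 4: no subgoal was derived twice
```

A plain dictionary keyed on the subgoal would work just as well; `functools.lru_cache` only keeps the sketch short.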

Craig Cannon [02:17] – That’s very impressive. I did my thesis like five years ago and I barely remember it. Did you pursue that further?

Murray Shanahan [02:25] – No, I didn’t. One other thing that I discussed in my thesis: I had a whole chapter on the frame problem. There are different ways of characterizing the frame problem, but in its broadest guise, it’s all about how a thinking mechanism, or thinking creature, or thinking machine if you like, can work out what’s relevant and what’s not relevant to its ongoing cognitive processes, and how it avoids being overwhelmed by having to rule out trivial things that are irrelevant. It comes up in a particular guise when you’re using logic to think about actions and their effects. Then you wanna make sure that you don’t have to spend a lot of time thinking about the null effects of actions. For example, if I move around a bit of the equipment, like your microphone here, then the color of the walls doesn’t change. You don’t wanna have to explicitly think about all those kinds of trivial things. So that’s one aspect of the frame problem. But then more generally, it’s all about circumscribing what is relevant to your current situation, what you need to think about and what you don’t.
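The microphone example is the narrow, logical form of the frame problem. One classic way classical AI planners sidestep it is the STRIPS assumption: an action lists only the facts it adds and deletes, and every other fact persists by default, so no explicit “moving the microphone doesn’t repaint the walls” axioms are needed. Below is a minimal Python sketch under that assumption; the `Action` type, the `apply_action` helper, and the fact names are hypothetical. It only addresses the representational side of the problem, not the broader question of relevance described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    preconditions: frozenset
    add: frozenset
    delete: frozenset


def apply_action(state, action):
    """Return the state after `action`, under the STRIPS assumption."""
    if not action.preconditions <= state:
        raise ValueError(f"preconditions of {action.name} not satisfied")
    # Only the delete and add lists are consulted; every other fact carries
    # over unchanged, so the action's null effects never have to be stated.
    return (state - action.delete) | action.add


# Hypothetical facts about the recording studio.
state = frozenset({"mic_on_desk", "walls_white", "lights_on"})
move_mic = Action(
    name="move_mic",
    preconditions=frozenset({"mic_on_desk"}),
    add=frozenset({"mic_on_stand"}),
    delete=frozenset({"mic_on_desk"}),
)

after = apply_action(state, move_mic)
print(sorted(after))  # ['lights_on', 'mic_on_stand', 'walls_white']
```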

Craig Cannon [03:44] – And so how did that translate to what folks are working on today?

Murray Shanahan [03:48] – Well, so it’s actually… So this thing, the frame problem, has recurred throughout my career, although there’s been a lot of variation in what I’ve done. I worked for a long time in classical artificial intelligence, which was, and still is, all about using logic-like or sentence-like representations of the world, with mechanisms for reasoning about those sentences in a rule-based approach. That approach of classical AI has fallen out of favor a little bit. And I got a bit disillusioned with it a long time ago. By about the turn of the millennium I’d more or less abandoned classical AI, ’cause I didn’t think it was moving towards what we now call AGI, artificial general intelligence, the big vision of human-level AI. And so I thought, well, I’m gonna study the brain instead, ’cause that’s the example that we have of an intelligent thinking thing. It’s the perfect example, so I wanted to try and understand the brain a bit more. So I started building computational neuroscience models of the brain, and thinking about the brain from a larger perspective: thinking about consciousness and the architecture of the brain and big questions. And now, and I’m getting around to answering your question eventually, by the way, I’m interested in machine learning. There’s been this resurgence of interest in machine learning. So I’ve kind of moved back to some of my interests in artificial intelligence. I’m not thinking so much about the brain or neuroscience or that kind of empirical work right now, and I’ve gone back to some of the old themes that I was interested in in good old-fashioned AI, classical AI. So that’s an interesting trajectory. And interestingly, the frame problem has been a recurring theme throughout all of that, because it keeps on coming up in one guise or another.
So in classical AI there was the question of how you can write out a set of sentences that represent the world without having to write out a load of sentences that encompass all the trivial things that are irrelevant. And somehow the brain seems to solve that as well. The brain seems to manage to focus and attend to only what’s relevant to the current situation and ignore all the rest. And in contemporary machine learning there’s also this kind of issue. There’s a challenge of being able to build systems, especially if you start to rehabilitate some of these ideas from symbolic AI, that focus on what’s relevant in the current situation and ignore things that are not. For example, a lot of work here at DeepMind has been done with these retro Atari computer games. So if you think of a retro computer game like Space Invaders, and you think about the little invader going across the screen, it doesn’t really matter what color it is. In fact, it doesn’t really matter what shape it is either. What really matters is that it’s dropping bombs, and you need to get out of the way of these things. So in a sense a really smart system would learn that it’s not the color that matters, and it’s not the shape that matters; it’s these little shapes that fall out of the thing that matter. And so that’s all about working out what’s relevant and what’s not relevant to getting a good score in the game.

Craig Cannon [07:28] – Sorry for the interruption everyone. We just got to see Garry Kasparov talk. It was pretty amazing.

Murray Shanahan [07:33] – Yeah, that was fantastic, wasn’t it? So Garry Kasparov in conversation with Demis Hassabis. He gave a great talk about the history of computer chess and, you know, his famous match with Deep Blue. So yeah, we just had to pop upstairs to watch that, and now it’s part two.

Craig Cannon [07:53] – Yeah kind of one of those once in a lifetime things. It also seems like he got out at the exact right time.

Murray Shanahan [07:58] – Yeah, maybe. Yes, he did. Demis said at the beginning of the interview that he thought Kasparov was perhaps the greatest chess player of all time. And so he was there at just the right time to be knocked off the top spot by a computer, in a way.

Craig Cannon [08:18] – Very cool, yeah. And he also said, maybe accurately, that any iPhone chess player now is probably better than Deep Blue.

Murray Shanahan [08:26] – Than Deep Blue was in 1997, yeah, which is interesting. I also thought it was interesting that he was saying that anybody in their living room can now sit and watch two grandmasters playing a match, use their computer to see as soon as they make a mistake, analyze the match, and follow exactly what’s going on. Whereas in the past it sometimes took expert commentators days to figure out what was going on when two great players were playing. So that was interesting.

Craig Cannon [08:52] – What struck me was how he was analyzing the current players and how they rely so heavily on the computer, or at least he thinks they rely so heavily on the computer, that they’re kind of reshaping their minds.

Murray Shanahan [09:04] – Right, yeah, and I think that’s certainly gonna be true with Go and with AlphaGo. It’s been interesting watching the reactions of top Go players like Lee Sedol and Ke Jie, who are in a way very positive about the impact of computers on the game of Go. They talk about how AlphaGo and programs like it can help them to explore parts of the universe of Go that they would never otherwise have been able to visit. And it’s really interesting to hear them speak that way.

Craig Cannon [09:44] – Yeah, it seems like they’re gonna open up just kind of new territories for new kinds of games to actually be created.

Murray Shanahan [09:50] – Yeah, indeed. We’ve already seen that with AlphaGo in the match with Lee Sedol. As you probably know, there was a famous move in the second game against Lee Sedol, move 37, where all the commentators, nine-dan masters, were saying, oh, this is a mistake, what’s AlphaGo doing, this is very strange. And then they gradually came to realize that this was a sort of revolutionary tactic, to put the stone in that particular rank at that particular time in the game. And since then, the top Go players have been exploring this kind of play, moving into that sort of territory when the conventional wisdom was that you shouldn’t.

Craig Cannon [10:36] – Yeah I mean the augmentation in general I find fascinating across the board. And he was hinting that as well.

Murray Shanahan [10:41] – Yeah, he was. He was very positive about the prospects of human-machine partnerships, where humans provide maybe a creative element and machines can be more analytical and so on.

Craig Cannon [10:55] – What was that law that he mentioned? I forgot the name of it, I wrote it down.

Murray Shanahan [10:58] – Oh, Moravec’s Law. Yeah, named after Hans Moravec, the roboticist who wrote some amazing books, including Mind Children. The phrase “mind children” alludes to the possibility that we might create artifacts that are like children of our minds, and that have lives of their own. And that’s a challenging idea. This is an old book, from the late ’80s.

Craig Cannon [11:30] – Do you buy it?

Murray Shanahan [11:35] – Maybe in the distant future.

Craig Cannon [11:38] – Okay, then maybe we ought to segue back into what we were talking about which is kind of related to your book, two books ago.

Murray Shanahan [11:45] – Embodiment and the Inner Life, yeah, which came out in 2010.

Craig Cannon [11:50] – Because that was kind of an integral question in the movie Ex Machina, right? Because you didn’t necessarily have to have a person-like AI. And more importantly, you didn’t have to have an AI that looked like a person, that looked like an attractive female who also looked like a robot. Right, they tee it up in the beginning. Nathan tees it up in the beginning.

Murray Shanahan [12:13] – I mean, obviously to a certain extent those are things that make for a good film, and so there are artistic choices and cinematographic choices. In the film Her we of course have a disembodied AI, so it’s possible to make a film about disembodied intelligence as well. But obviously a lot of what drives the plot forward in Ex Machina is to do with her embodiment and the fact that Caleb is attracted to her and sympathizes and empathizes with her. But there’s also a philosophical side to it too, which is that, certainly when it comes to human intelligence and human consciousness, our physical embodiment is a huge part of that. It’s where our intelligence originates from, because what our brains are really here to do is to help us navigate and manipulate this complex world of objects in 3D space. So our embodiment is an essential factor here. We’ve got these hands that we use to manipulate objects, and we’ve got legs that enable us to move around in complicated spaces, and in a sense that is what our brains are originally for. The biological brain is there to make for smarter movement. And all of the rest of intelligence is a flowering out of that, in a way.

Craig Cannon [13:50] – And so did you buy the gel that he showed Caleb in the beginning?

Murray Shanahan [13:54] – It’s interesting because of the way the film is constructed. Alex Garland, the writer and director, sometimes says that the film is set 10 minutes into the future. It’s really meant to be a lot like our world, just very slightly in the future. And so when you see Nathan’s lair, his retreat in the wilderness, there’s nothing particularly science-fictiony about it. It’s designer-ish, and of course it is in fact a real hotel. You can actually go and stay in this place.

Craig Cannon [14:30] – Really, where is it?

Murray Shanahan [14:32] – In Norway. And so, you know, it doesn’t have a particularly futuristic feel. Almost everything you see is not very futuristic. It’s not like Star Wars. But then there are a few carefully chosen things that look very futuristic: Ava’s body, the way you can see the insides of her torso and her head, and then when he shows the brain, which is made of this gel. And I think that was a good choice, because at the moment we don’t know how to make things like Ava, that have that level of artificial intelligence. So that’s the point at which you really have to go sci-fi.

Craig Cannon [15:17] – Well, I mean, those life-like melding elements… Have you watched the new HBO show? Is it Westworld?

Murray Shanahan [15:24] – Do you know, I haven’t. No. It really is on my to-watch list, but I’ve heard a lot about it. I remember the original with Yul Brynner, but I haven’t watched the current series.

Craig Cannon [15:40] – They definitely take cues. I guess it’s probably in the sci-fi canon that you have this basement lair where you create the robots and then they become lifelike through this whole process. Even if you just watch the opening title credits, it’s exactly that: the 3D-printed sinews of the muscles. It looks exactly like Nathan’s lair. So what I was wondering is, as you were consulting on the film, how much of that were they asking you about? Were they saying, is this remotely ten minutes in the future, or is this 50 years in the future?

Murray Shanahan [16:12] – Well, it wasn’t really like that, actually. I’ll tell you the whole story of how the collaboration came about. I got this email from Alex Garland, an unsolicited email out of the blue. It’s the kind of unsolicited email you really want to get: a famous writer-director who wants you to work on a science fiction film. He basically said, oh, I read your book Embodiment and the Inner Life, and it helped me to crystallize some of the ideas around this script that I’m writing for a film about AI and consciousness. Do you want to get together and have a chat about it? So I didn’t have to think very hard about that. We got together and had lunch, and he sent me the script, so I’d read through the script by the time I got to see him. He certainly wanted to know whether it felt right from the standpoint of somebody working in the field. And I have to say it really did. As a script it was a great page-turner, actually. It’s interesting being in that position, because now Ex Machina and the image of Ava have become iconic and you see them everywhere. But of course when I read the script, none of that imagery existed, so I had to conjure it up in my head.

Craig Cannon [17:41] – So he didn’t give you any kind of preview of what he was thinking aside from text?

Murray Shanahan [17:45] – No, because nobody had been cast at that point. Actually, when we met up, if my memory serves me right, he did have some images or mock-ups from artists of what Ava might look like. But I hadn’t seen them when I read the script, so for me it was just the script. And the characters really leapt off the page. The character of Nathan in particular was very vivid. You really didn’t like this guy, just reading the script. Anyway, so I sort of grabbed the title of Scientific Advisor. I’m not sure I was ever officially a Scientific Advisor, but Alex really wanted to meet up and talk about these ideas. He wanted to talk about consciousness and about AI, and so we met up several times during the course of the filming. I think there’s very little that I contributed to the film at that point. In a sense, perhaps I’d already done my main bit by writing the book. There were a few little phrases that I corrected, tiny, tiny things. But otherwise I just thought, you know, great, really very good. There are some lines in the film that I thought were so spot on.

Craig Cannon [19:12] – Anything you remember? Like, what line in particular?

Murray Shanahan [19:15] – Yeah, so a favorite one is where… Initially Caleb is told that he’s there to be the human component in a Turing test. And of course it isn’t a Turing test, and Caleb says so pretty quickly. He says, well, look, in a real Turing test, the judge doesn’t see whether it’s a human or a machine, and of course I can see that Ava’s a robot. And Nathan says, “Oh yeah, we’re way past that. The whole point here is to show you that she’s a robot and see if you still feel she has consciousness.” I thought that was so spot on. I thought it was an excellent point, making a very important philosophical point in one little line in the middle of a psychological thriller, which is pretty cool. So I call that the Garland test.

Craig Cannon [20:05] – I found it very, like, yeah, that was really astute. I was wondering which text influenced him most when he was writing it, and in particular where you found that your work had seeds planted throughout the movie. Where do you think it was most influential?

Murray Shanahan [20:21] – Well, good question. You’ll need to ask him.

Craig Cannon [20:25] – Yeah, maybe.

Murray Shanahan [20:30] – So my book is very heavily influenced by Wittgenstein. When it comes to these deep philosophical questions, Wittgenstein is, in a sense, very down to earth. He’s always asking, well, what do we mean by consciousness and intentionality and all these big, difficult words? Wittgenstein is always taking a step back and saying, well, what is the role of these words in ordinary life? And the role in ordinary life of a word like consciousness is all to do with the actual behavior of the people we see in front of us. So, in a sense, I judge others… Well, I don’t actually go around judging others as conscious. That’s the point that he would make as well. I just naturally treat them as conscious. And why do I naturally treat them as conscious? Because their behavior is such that they’re just like fellow creatures, and that’s just what you do when you encounter a fellow creature. You don’t think carefully about it. This is an important Wittgensteinian point that I bring out very much in the book. And in a sense that’s very much what happens to Caleb. Caleb isn’t sitting there making notes saying, therefore she is conscious. Rather, through interacting with her, he just gradually comes to feel that she is conscious and to start treating her as conscious. There’s something very Wittgensteinian about that. And I’d like to think that comes from my book, to an extent.

Craig Cannon [21:59] – Well, I had never… It seems very cinematic that the Turing test would play out over the course of a week. But I had never seen a Turing test framed that way. I guess it’s not a… You know, it’s a Garland test. But did you coach him in any way on the natural steps that someone would take as the test escalates?

Murray Shanahan [22:18] – No, not at all. This is all Alex Garland’s stuff. I had no input on that side at all. The plot was already there, and the whole script was already 95% done when I first saw it. There were a few differences between the final film and the script that I saw, and indeed the published script.

Craig Cannon [22:45] – So that was a question from Twitter, from what seems to be a pseudonym: @TrenchShovel. They ask, “Were there any parts of the script that were changed or left out because they weren’t technically feasible or realistic?”

Murray Shanahan [23:01] – Well, there was a bit in the script that was left out of the final film, which I think is very significant. So, spoilers ahead for the few people who haven’t seen it. I assume if you’re listening to this you’ve seen the film.

Craig Cannon [23:17] – Hopefully.

Murray Shanahan [23:18] – So right at the end of the film, Ava is climbing into the helicopter, having escaped from the compound, and she’s about to fly off, and we see her have a few words with the helicopter pilot. I wonder what she says, actually. That’s interesting. Just fly me away from here? And then off the helicopter goes. Now, in the written script there’s a direction there which says something along the lines of: we see waveforms and we see facial recognition vectors fluttering across the screen, and we see this, that, and the other. And it’s utterly alien. This is how Ava sees the world. It’s utterly alien. The very first version of the film that I saw was a first, crude cut, before all the VFX had been properly done. They put this scene in, with a little bit of these visual effects. And then I think they decided it didn’t really work terribly well at that point, so they cut it out. In the version that we see, you don’t actually see that. You just see her speaking to the helicopter pilot as she climbs into the helicopter. But it’s a very significant direction, because I think one of the great strengths of the film is that it leaves so many unanswered questions. You’re left thinking, is she really conscious? Is she really capable of suffering? Is she just a kind of machine that’s gone horribly wrong, or is she a person who’s understandably had to commit this act of violence in order to save herself? Which of these is it? And you never really quite know. Although I think people lean more towards the interpretation that she’s conscious in a straightforward kind of way. But that version of the ending points to the fact that there’s a real ambiguity there, because if it had been shown you might be leaning more the other way.
You might be thinking, gosh, this is a very alien creature indeed. And she still might be really, genuinely conscious, and genuinely capable of suffering. But it would really throw open the question: how alien is she?

Craig Cannon [25:48] – To me that would also… So just so I understand, it was a VFX over the actual image, right?

Murray Shanahan [25:54] – Well, the script doesn’t specify exactly how it would be done. It just says something like we see facial recognition vectors fluttering; I can’t remember the exact words. But obviously the idea was to give an impression of what things looked like and sounded like for Ava, in some sense. Which of course is in a sense impossible to convey, and I think maybe that’s why they thought, how would we do this?

Craig Cannon [26:26] – Well, I didn’t know if they were also trying to avoid… I guess it’s not really a fourth wall, but trying to avoid the situation where the author of the movie, Alex, is saying, we’re in a simulation: this is what you’re seeing as if you were the mind of an artificial intelligence.

Murray Shanahan [26:45] – Yes, well, I think it was meant to be shown from her point of view, so I would imagine it wouldn’t have come across that way if they’d got it right. I don’t know exactly why they decided not to put it in. But the fact is that the direction is there in the script. And by the way, that’s in the published script, so I’m not giving anything away here. The published version of the script has this little direction in it.

Craig Cannon [27:11] – Yeah, I rewatched it last night, and I remembered the ending. It’s so vague. It’s so vague what happens.

Murray Shanahan [27:19] – I do remember. ’Cause I quite like the ambiguity, you know, where you don’t really know. Is she conscious at all? Is she conscious just like we are? Is she conscious in some kind of weird, alien way? You never really know. And this is a deep philosophical question. And there’s also a moment right at the end where she’s coming down the stairs, having basically escaped. She’s coming down the stairs at the top floor of Nathan’s compound, and she smiles.

Craig Cannon [27:48] – She does that, she goes up the stairs, kind of looks back and surveys and she smiles.

Murray Shanahan [27:52] – Yes, yes, and she smiles. And I remember saying to Alex, after I’d seen the first version, I don’t think you should have that smile there, because it’s too human. And he really thought it was important to have the smile there. I think Alex would say… I don’t want to put words in his mouth, so I apologize to Alex if he’s listening to this. But I think he would say that people can of course have their own interpretations. But he would probably lean towards the interpretation that she is conscious in the way that we are. And the evidence for that is: why would anybody smile to themselves privately if they weren’t conscious just like we are?

Craig Cannon [28:39] – What else in those conversations, you know you’re watching edits of the movie, what else did you guys work through?

Murray Shanahan [28:45] – Well, so there’s the Easter Egg.

Craig Cannon [28:49] – Yeah, that’s a good one.

Murray Shanahan [28:50] – Should I tell you the story of the Easter Egg? Yeah, so the first time I saw any kind of clip of Ex Machina, Alex sent me an email and said, well, do you want to come in and see a bit of Ex Machina? It’s in the can, as these film people say, though there are no cans anymore for the film to go in. So come to the cutting room. So I went along and he showed me some scenes, and at one point he stopped the machine and said, this is the moment where Caleb is reprogramming the security system in order to release all the locks to try and get out. And so Alex froze the frame there and said, ah, right. Now you see these computer screens where Caleb is typing? Do you see this window here? Now, this window is all filled with some junk code at the moment. But you can be sure there are gonna be some geeky types out there who, the moment this thing comes out on DVD, are gonna freeze that frame and say, what does this do? So let’s give them an Easter Egg, he said, let’s give them a little surprise. So he said that basically that window is yours. Put something in there, some kind of hidden message, and maybe make it an allusion to your book. So I thought, this is very cool. This is the best product placement ever. I’ve probably sold one other copy thanks to that. So I went home, and I made the mistake that evening of buying a bottle of sake, and I was drinking this sake, saying, well, what am I gonna do for this? And I got down to coding something up in Python, and I was having a good laugh at what I was gonna do. So I thought, okay, it’s gotta be vaguely to do with security. So, encryption. So let’s have something that has some primes in it. So I wrote this little piece of… the Sieve of Eratosthenes, a classic way of computing primes.
And I wrote this myself, rather than getting it off Wikipedia or something. I sat there and coded it myself after four glasses of sake. Basically it computes a big array of prime numbers, and then there’s this thing that indexes into the prime numbers and then adds some random-looking other numbers to the numbers that it’s… And then those are ASCII characters, and then it prints out what those ASCII characters actually look like. So when you look at this code on the screen it’s just gobbledygook, but something to do with prime numbers. If you run it, it prints out ISBN equals, and the ISBN of my book Embodiment and the Inner Life. Anyway, so that was very exciting. I was very pleased with this, and I handed it over to them and they put it in. But I have to say Alex was wrong. It wasn’t when the DVD came out. Oh no, it only had to be on BitTorrent for 24 hours, long before the DVD came out, before there were pages about this thing on the internet. There was a whole Reddit thread. There’s a GitHub repository with my piece of code. And the Reddit thread includes a whole lot of criticism of my coding style. It’s not PEP 8 compliant. It’s true, it’s really true. But what I really regret is that I put the wrong terminating condition on a loop. You know, you can terminate the Sieve of Eratosthenes at the square root of n. You don’t have to go all the way to n over two. But for some reason I wasn’t paying attention, four glasses of sake, and I put, you know, it terminates at n over two. It’s inefficient.
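The two details described here, a sieve whose outer loop runs to n/2 instead of stopping at the square root of n, and a message recovered by indexing into the primes and adding offsets to get ASCII codes, can be sketched in a few lines of Python. This is a hypothetical reconstruction of the idea, not the code that appears in the film: the `sieve`, `encode`, and `decode` names, the choice of the k-th prime for the k-th character, and the sample message are all assumptions. The comparison shows why the n/2 bound is not a bug: both bounds yield the same primes, and the larger one only adds redundant cross-off work.

```python
import math


def sieve(n, stop=None):
    """Sieve of Eratosthenes: return (primes below n, number of cross-off writes).

    `stop` is the exclusive bound on the outer loop. The default,
    isqrt(n - 1) + 1, is enough because every composite m < n has a prime
    factor p with p * p <= m. A larger bound (such as n // 2) gives the same
    primes and only repeats work.
    """
    if stop is None:
        stop = math.isqrt(n - 1) + 1
    is_prime = [True] * n
    is_prime[0] = is_prime[1] = False
    writes = 0
    for i in range(2, stop):
        if is_prime[i]:
            # Cross off 2i, 3i, ...; starting at i * i would save a little more.
            for j in range(2 * i, n, i):
                is_prime[j] = False
                writes += 1
    return [k for k in range(n) if is_prime[k]], writes


def encode(message, primes):
    """Hypothetical scheme: character k becomes (k, ord(char) - k-th prime)."""
    return [(k, ord(ch) - primes[k]) for k, ch in enumerate(message)]


def decode(pairs, primes):
    """Index into the primes, add the offset, and read the result as a character."""
    return "".join(chr(primes[k] + offset) for k, offset in pairs)


fast, fast_writes = sieve(10_000)               # outer loop stops below 100
slow, slow_writes = sieve(10_000, 10_000 // 2)  # outer loop runs to n/2
print(fast == slow)               # True: same output, so it meets the spec
print(slow_writes > fast_writes)  # True: the n/2 bound just does extra work

pairs = encode("Hello, Ava", fast)
print(decode(pairs, fast))        # Hello, Ava
```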

Craig Cannon [32:38] – We’ll let it slide. Maybe that’s the bug in her code and it will always be broken.

Murray Shanahan [32:44] – Well, it’s not a bug. Give me some credit. You know, it’s not actually a bug. It does meet the specification.

Craig Cannon [32:50] – Fair enough.

Murray Shanahan [32:51] – But it’s not efficient.

Craig Cannon [32:52] – We should ask some of these questions from Twitter. I know people are very excited to ask you a question. So we already asked one so… Patrick Atwater (@patwater), let’s get to his question. So, “Craig, ask how much closer we are to the sort of general Hollywood style AI now than we were in the ’50s?”

Murray Shanahan [33:13] – In the ’50s.

Craig Cannon [33:14] – So I think what he’s alluding to is the flying-car, pastel version: the kind of crazy, futuristic version of AI from the ’50s, versus the AI that they’re portraying in the movie.

Murray Shanahan [33:27] – Well, I can tell you that we’re precisely 60 years closer than we were in the ’50s. But I don’t think that’s the kind of answer that…

Craig Cannon [33:33] – No, we can tweet him that.

Murray Shanahan [33:35] – Well, of course you have to remember that in Ex Machina, as in all films, a lot of the way AI is portrayed has to do with making a good film and telling a good story, and in particular people love stories where the AI is some kind of enemy or nemesis. Actually, Garry Kasparov, who we just heard speak, made a very interesting point about this, didn’t he? He pointed out, and I think he’s right, that there’s been a shift in science fiction from very positive, utopian views, where we’re gonna get to the stars, to more dystopian views like the Terminator. But anyway, it certainly makes for a good story if your AI is bad. It also makes for a good story if your AI is embodied and very human-like. Whereas in reality, insofar as AI is gonna get more and more sophisticated and closer and closer to human-level intelligence, it’s not necessarily going to be human-like. It’s not necessarily going to be embodied in robotic form. Or if it is embodied in robotic form, it might not be in humanoid form. In a sense, a self-driving car is a kind of robot.

Craig Cannon [34:59] – Perfect, yep.

Murray Shanahan [35:01] – So I think things will be a bit different from the way Hollywood has portrayed them. Of course, if you go back to the ’50s, it’s very interesting to look at retro science fiction. I love retro science fiction. Take something like Forbidden Planet: Robby, of course, in Forbidden Planet, is this metal hunk of a thing, which is completely impractical. You think, how would it get around at all? And how would it do anything with these claw-like arms and hands that it’s got? So clearly our view of the kinds of bodies we might be able to make has changed a lot.

Craig Cannon [35:42] – I think it’s also quite difficult because there’s not really clear benchmarking happening right now. It’s not obvious. If it were just energy and compute going into this, then the race, I mean, it wouldn’t be over, but it would be very obvious who’s winning and what’s going on. It seems to me that there are clear breakthroughs that have to happen.

Murray Shanahan [36:01] – Yeah, that’s certainly my view. So if we’re thinking now about the question of when we might get to human-level AI, or artificial general intelligence, I think we really don’t know. Certainly you can draw graphs that extrapolate computing power, how fast the world’s fastest supercomputers are. And depending on how you calculate it, we’re pretty close to human-brain-scale computing already in the world’s fastest supercomputers. We’ll get there within the next couple of years. But that doesn’t mean we know how to build human-level intelligence. That’s an altogether different thing. There’s also controversy about how you make that calculation. I mean, what do you count? Do you count a neuron? How do you count the computational power of one neuron? Or one synapse? It may be that some of the immense complexity in the synapse is functionally irrelevant. It might be chemically important, but functionally irrelevant to cognition. So there are a lot of open questions there. But even if we allow a conservative estimate and assume we’re gonna have computing power equivalent to that of the human brain by, say, 2022 or something, or 2020, we would still need to understand exactly how to use all of that computing power to realize intelligence. And I think there are probably an unknown number of conceptual breakthroughs between here and there.

Craig Cannon [37:39] – I mean, specific AI is absolutely happening, but general AI, which is what he’s talking about, yeah.

Murray Shanahan [37:44] – Yeah, exactly. So clearly there’s lots of specialist artificial intelligence, where we’re getting really good at things like image recognition, image understanding and speech. Speech recognition has more or less been cracked, meaning just the process of turning the raw waveform into text. But real understanding of the words, that’s a whole other story. Today’s personal assistants can be cool, and they’re gonna get better and better, but they’re still way off displaying any genuine understanding of the words that are being used. I think that will happen in due course, but we’re not quite there yet.

Craig Cannon [38:30] – I mean, fortunately or unfortunately. ’Cause that also underlies one of the other questions that I did want to ask. This is from @meka_floss on Twitter. Their question is, “Excellent movie but why is Asimov’s Law forgotten?” that would be the absolute first thing they asked. So for people who don’t know what that is, there are three laws of robotics, right? I wrote these down. One, a robot may not injure a human being or, through inaction, allow a human being to come to harm. Two, a robot must obey orders given to it by human beings except where such orders would conflict with the first law. And three, a robot must protect its own existence as long as such protection does not conflict with the first or second law. So their point is basically, why is the first law broken in Ex Machina?

Murray Shanahan [39:23] – Well, of course Asimov’s Laws are themselves the product of science fiction. So they’re not real laws.

Craig Cannon [39:29] – We should make that clear.

Murray Shanahan [39:33] – So Asimov wrote those laws down in order to make for great science fiction stories. And all of Asimov’s stories center on the ambiguities and difficulties of interpreting those laws or realizing them in actual machines, and often on the moral dilemmas, as it were, that the robot faces in trying to uphold them. So even if we did want to somehow put something like those laws into… I mean, it’s not relevant to robotics today. But if we did want to put them into a robot, it would be immensely difficult. So I should take a step back and say why it’s irrelevant to robotics today. Let me qualify that. Of course there are people who want to build autonomous weapons and all kinds of things like that, and you might say to yourself, well, I would very much like somebody to pay attention to something a bit like Asimov’s Laws and say, you shouldn’t build a robot that is capable of killing people. But that would be a principle, if we were to have it, that the designers and engineers would be exercising, not one that the robot itself was exercising. That’s the sense in which it’s not relevant today, because we don’t know how to make an AI today that is capable of even comprehending those laws. So that’s the first point. So why doesn’t… Okay, but when we’re thinking about the future, as in Ex Machina, why not? Well, it would obviously make a very different story if Asimov’s Laws were put into Ava. But let’s suppose it was a world where we were minded to put Asimov’s Laws into Ava. Well, maybe Ava might reason that she is human. What is the difference between herself and a human? And maybe she would reason that she shouldn’t allow herself to come to harm, and therefore she was justified in what she was doing. Who knows?
I mean, it’s just a story, right? I think we have to remember that it’s just a story. And it’s actually very important. I think science fiction is really, really good at making us think about the issues. But at the same time we always have to remember that they’re just stories, that there’s a difference between fantasy and reality.

Craig Cannon [42:10] – And I think it’s also kind of covered in the movie when Nathan and Caleb are debating. Nathan criticizes Caleb for going with his gut reaction and his ego, and says that if he were to think through every logical possibility for every action, he would never do anything. Which runs directly against all these laws. Ava would never do anything if she could possibly harm someone down the road by, you know, burning fossil fuel, by being in a helicopter.

Murray Shanahan [42:41] – I mean, I guess we all have to confront those sorts of dilemmas all the time. And indeed, moral philosophers have got plenty of examples of these kinds of dilemmas that make it obvious that no simple, single rule is really enough by itself. Trolley problems, if you know them, where the trolley is heading down the track and there are points, and for some unknown reason somebody has tied one person across the tracks on one fork…

Craig Cannon [43:14] – It’s very cinematic.

Murray Shanahan [43:17] – It’s very cinematic. And on the other fork, three people are tied across the tracks. The points are currently set so that the trolley is gonna go over the three people and kill them. And you are faced with the possibility of changing the points so that the trolley goes down the first track and kills only one person. So what do you do? Philosophers can spend entire conferences debating what the answer to this is and thinking of variations and so on. And that little thought experiment there is a distillation of much more complex moral dilemmas that exist in the real world.

Craig Cannon [44:00] – Absolutely. So before we go, I do want to talk about your thoughts on broader things. You know obviously you work here.

Murray Shanahan [44:07] – We haven’t been broad enough.

Craig Cannon [44:09] – More like broader things than Ex Machina. So obviously you’re here at DeepMind. You’re at Imperial as well, 20% of the time. Can you talk a little bit about things you’re excited about for the future as it relates to what you’re working on?

Murray Shanahan [44:24] – Well, I’ve recently got very interested in deep reinforcement learning. Deep reinforcement learning is one of those things that DeepMind has really made famous. They published this paper back in 2014, and the next version in 2015, about a system that could learn to play these retro Atari games completely from scratch. All the system sees is the screen, just the pixels on the screen. It’s got no idea what objects are present in the game. It just sees raw pixels and the score, and it has to learn by trial and error how to get a good score. And they managed to produce this system, which is capable of learning a huge number of these Atari games completely from scratch, in some cases reaching superhuman performance, in other cases human-level performance, and in some cases it wasn’t too good at the games. I think that opened up a whole new field. That system is called DQN, and to my mind DQN is in a sense one of the very first general intelligences, because it learns completely from scratch. You can throw a whole variety of problems at it. It doesn’t always do that well, but in many cases it does pretty well. So, to answer your question: I’ve got very interested in this field of deep reinforcement learning. Long before I joined DeepMind, I first started playing with a DQN system when they made the source code public, and I pretty quickly realized that it’s got quite a lot of shortcomings as well, as today’s deep reinforcement learning systems all have. For a start, it is very, very slow at learning. When you watch it learning you think, actually, this thing is really stupid. It might get to superhuman performance eventually, but my goodness, it takes a long time to do it.
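DQN combines Q-learning with a deep neural network that reads raw pixels. As a much smaller, hedged illustration of only the trial-and-error part, here is tabular Q-learning in Python: a lookup table stands in for the neural network, and a hypothetical six-cell corridor with a single reward at the far end stands in for an Atari screen and score. The environment, the `step` and `q_learning` names, and the hyperparameters are assumptions chosen for the example; nothing here reproduces DeepMind’s code.

```python
import random


def step(state: int, action: int, length: int = 6) -> tuple[int, float, bool]:
    """Hypothetical toy environment: a corridor of `length` cells.

    Action 0 moves left, action 1 moves right (walls clamp the position).
    Reaching the right-hand end gives reward 1 and ends the episode;
    every other step gives reward 0.
    """
    nxt = max(0, min(length - 1, state + (1 if action == 1 else -1)))
    done = nxt == length - 1
    return nxt, (1.0 if done else 0.0), done


def q_learning(episodes: int = 2000, length: int = 6, alpha: float = 0.1,
               gamma: float = 0.9, epsilon: float = 0.1,
               seed: int = 0) -> list[list[float]]:
    """Tabular Q-learning: learn Q[state][action] purely from trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(length)]  # no prior knowledge of the task
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually take the best-looking action, sometimes
            # explore at random (ties are also broken at random).
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, action, length)
            # Move Q toward the reward plus the discounted best value of the next state.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q


q = q_learning()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(len(q) - 1)]
print(policy)
```

Even in this toy, the agent starts out wandering at random, and the value of the single reward has to propagate back one cell at a time over many episodes before the greedy policy points right everywhere: a very small-scale hint of the slowness described above.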

Craig Cannon [46:28] – Even just playing Space Invaders.

Murray Shanahan [46:30] – Yeah, or even Pong or something simpler than that. It takes an awfully long time, whereas a human is very quickly able to work out some general principles. What are the objects? What are the sort of rules of… And you work it out really quickly. So it made me think about my ancient past in classical artificial intelligence, symbolic AI, and it made me realize that there were various ideas from symbolic AI that could be rehabilitated and put into deep reinforcement learning systems in a more modern guise. That’s the kind of thing that I’m most interested in right now.

Craig Cannon [47:07] – Very cool. Yeah, that was actually one of my favorite questions from the Kasparov talk today. Someone who’s working on Go asked exactly that: how can humans compute so quickly? They work out what is not relevant to the game and then execute, I guess it was chess, right, in 50 moves rather than 100 moves. And it’s very much that framing.

Murray Shanahan [47:29] – Yeah, that was Thore, Thore, who’s one of the people on the AlphaGo team. And yeah, that’s a very deep question I think he was asking there.

Craig Cannon [47:39] – Yeah, it’s fascinating. Cool, so if someone wants to learn more about you or more about the field in general, what would you recommend?

Murray Shanahan [47:47] – Well, if they want to learn more about me, I can’t think why they would want to, but if they did, they can Google my name and find my website. If they want to learn more about the field in general, well, we’re in the very fortunate position of having an awful lot of material out there on the internet these days: all kinds of lectures and TED talks and TEDx talks and so on. If people want a bit more technical detail, there are some excellent tutorials about deep learning and so on out there. There are lots of MOOCs, Massive Open Online Courses. So there’s a huge amount of material on the internet out there.

Craig Cannon [48:26] – Do you have a budding career in technical advising, or is there an Ex Machina 2?

Murray Shanahan [48:32] – So people often ask me about Ex Machina 2, which of course is none of my business. But whenever I’ve heard Alex Garland asked about that, he always says he’s got no intention of producing an Ex Machina 2, that it was a one-off. As for scientific advising, yeah, I have been involved in a few other projects. There was a theater project I enjoyed, with Nick Payne at the Donmar Warehouse here.

Craig Cannon [49:03] – What’s that called?

Murray Shanahan [49:04] – So this play by Nick Payne was called Elegy, and it’s about an elderly couple where one of them has got a dementia-like disease, and it’s also set sort of ten minutes into the future. Techniques have been developed whereby these diseases can be cured, but the cost you have to pay is that you lose a lot of your memories. So the play really centers on the difficulties for the partner, knowing that her partner’s memories of their first meetings and so on, and of their love, are actually going to sort of vanish. So it was about that, and it was more neuroscience kind of stuff. But I’ve also been involved with an artistic collective called Random International. Random International do some amazingly cool stuff, so I highly recommend Googling them. They were famous for this thing called Rain Room.

Craig Cannon [50:13] – At MoMA in New York?

Murray Shanahan [50:15] – Yes, that’s right, exactly. It tours, but it was at MoMA, over in New York, indeed. All their art uses technology in various interesting ways, often about how we interact with technology. So in Rain Room, the idea is that it’s a room with sprinklers up on the ceiling, and you walk around in this room and it’s raining everywhere, but there’s some clever technology that senses where you are.

Craig Cannon [50:45] – And you worked on that?

Murray Shanahan [50:46] – No, no, I didn’t. I should finish, but there’s some clever technology that senses where you are and turns off the sprinklers immediately above your head. So you walk around in this room miraculously never getting wet. So that’s one of the things. They also worked on this amazing sculpture called 15 Points. This is based on point-light displays. A point-light display is one of these little displays where on the screen you’ve just got, say, 15 dots. These 15 dots move around and suddenly you see that it’s a person, ’cause the 15 dots are at the elbow joints and the neck and the head and the torso and the knees and so on, and you see these things moving around and you instantly interpret it as a person moving. You can even tell whether the person is running or walking or digging, and often even whether it’s a man or a woman, just from these 15 points moving. So they constructed this beautiful sculpture which has these rods with little lights on the end, rods and motors, so it’s very much a mechanical, robot-like thing. When you see it stationary it’s just this weird kind of contraption. But then it starts moving, with all the lights on the end, and suddenly you see a person appear, walking towards you. And I thought that was a wonderful example of how we see. We see someone there when there isn’t anyone. And of course for me that was very interesting because it made me think about when we do that with machines. Maybe we often think that there’s someone at home when there isn’t. So all of their art is about those kinds of questions.
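The Rain Room mechanism is described only at a high level here: sense where visitors are, then switch off the sprinklers above them. A minimal Python sketch of that control rule, under loudly hypothetical assumptions (a regular sprinkler grid, visitor positions already supplied by some tracking system, and a fixed dry radius), might look like this. It is not Random International’s implementation, whose details the interview does not give, and `sprinkler_states`, `radius` and `spacing` are illustrative names.

```python
import math


def sprinkler_states(cols: int, rows: int,
                     visitors: list[tuple[float, float]],
                     radius: float = 1.0,
                     spacing: float = 1.0) -> list[list[bool]]:
    """Return a rows x cols grid where True means the sprinkler is raining.

    Hypothetical model: sprinkler (row, col) sits at (col * spacing,
    row * spacing) metres; any sprinkler within `radius` metres of a tracked
    visitor position (x, y) is switched off.
    """
    grid = []
    for row in range(rows):
        line = []
        for col in range(cols):
            sx, sy = col * spacing, row * spacing
            near_someone = any(math.hypot(sx - vx, sy - vy) <= radius
                               for vx, vy in visitors)
            line.append(not near_someone)
        grid.append(line)
    return grid


# One visitor standing at (2, 2) in a 5 x 5 grid: the sprinkler overhead and
# its four direct neighbours (1 m away) switch off; diagonals (~1.41 m) stay on.
for line in sprinkler_states(5, 5, [(2.0, 2.0)]):
    print("".join("~" if on else "." for on in line))
```

In this sketch, recomputing the grid each time the tracker reports new positions is what would keep a moving visitor dry.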

Craig Cannon [52:40] – That’s so cool. I think that’s a perfect place to end it. And we’ll link up to all their work as well.

Murray Shanahan [52:44] – Okay, great.

Craig Cannon [52:45] – All right, thanks Murray.

Murray Shanahan [52:45] – Yeah, sure, thank you.

Craig Cannon [52:47] – All right, thanks for listening. So if you want to watch the video or read the transcript from this episode, you can check out blog.ycombinator.com and as always please remember to subscribe and rate the show. Okay, see you next time.


Author

Y Combinator

Y Combinator created a new model for funding early stage startups. Twice a year we invest a small amount of money ($150k) in a large number of startups (recently 200). The startups move to Silicon